As the CTO for Staffware Plc, Jon was responsible for a team of 70 people, split geographically across two countries (the USA and the UK) and four locations. Jon's primary responsibility was directing the product development cycle. Furthermore, Jon had overall executive responsibility for product strategy, positioning, public speaking and so on. Finally, as a main board director he was heavily involved in PLC board activities including mergers and acquisitions and corporate governance, and served as a board director of several subsidiaries. Jon joined Staffware in 1992 as the Technical Director. At that time Staffware was a privately held company employing approximately 18 people with an annual turnover of £1.2 million. During Jon's tenure the company was taken public, floating on the London Alternative Investment Market (AIM) in 1995, followed by a full listing on the London Stock Exchange in 2000. The company has achieved year-on-year growth of more than 50% (CAGR), has been profitable every year, and now employs some 350 people in 16 countries with a turnover of £40 million. Jon was personally responsible for the redesign of the product from a Unix-only “green screen” system supporting around 20 users to a full client-server, three-tier application supporting many thousands of users. Furthermore, Jon personally designed the world’s first full Java workflow client and designed and implemented the "eProcess" initiatives. When Staffware acquired a CRM company in the USA, Jon was appointed Chairman of Staffware eCRM Inc. until the integration was complete. A significant amount of Jon's time is spent giving high-level presentations to the boards of potential customers (both business and technical) as well as to business and technical partners. Jon is recognized as an excellent public speaker.

As a Director of the International Process and Performance Institute (IPAPI) I am helping to lead the way in the improvement approach on process for personal, organizational and customer success. To achieve that I have worked with the rest of the Institute's team to develop the IPAPI CEM Method™ (the de facto standard for delivering sustainable business process optimization and improvement), the theory and practice behind the 21st Century Value Chain, the Process Innovation Landscape, and the Certified Process Professional Program.

David Wiltz has a Bachelor of Journalism degree from University of Texas, Austin. He has written numerous articles for newsletters and newspapers including the Dallas Morning News. Currently a consultant with SIBRIDGE Consulting, David has more than 25 years of experience in IT in the roles of Manager, Application Architect, Enterprise Architect, Business Analyst, Mentor and Developer. His major consulting focus is helping companies more effectively utilize Enterprise Architecture concepts and deliverables for operational improvement, cost savings and strategic enablement. With this focus, a major percentage of engagements involve process improvement and/or re-engineering and mapping IT systems to business processes. David also assists clients with improving their systems development processes, especially the up front steps of taking business strategy and objectives and transforming those into projects, requirements and architectural solutions. |

| Written by Terry Schurter |

From its beginnings in the early 90s to the boom in the early 00s – where is BPM in 2009?

While BPM stands for “Business Process Management,” the definitions of Business Process Management in use in the marketplace differ dramatically in meaning. So the term itself does not define a specific problem, approach or set of activities. That definition comes from the person or organization using the term as they place “BPM” within the context that they care about. The biggest disparity exists between the commercial software market and the business pundits, who define BPM in completely different ways. On a historical note, BPM did indeed start as a management practice, coming directly out of the more experienced practitioners of Business Process Reengineering. From these people, Business Process Management was originally conceived of as a way to better manage the business operations of large commercial entities. Early entrants into the BPM software market created new “work flow” tools that often required little code development to express “business processes” heavy with human interaction. The belief within the early BPM software community was that by providing business users a simple set of tools with which they could model these human-centric processes, the processes could then be “orchestrated,” dramatically improving the process efficiency and quality for these business operations. Early adopters of these products often applied them to a unique subset of business processes indeed heavy with human interaction, where little technology was in place, and where consistency in the process was poor at best. The case studies that arose from using BPM software on these processes were very, very compelling. As the market began to gain widespread attention and mainstream media coverage the larger software vendors began to eye the market with interest.
Between acquisitions, product development and repositioning of existing products a fundamental shift started to take place. That shift is almost complete now. What was the shift? The BPM market shift is the move from the original definition of business processes to one that focuses almost exclusively on IT systems and IT operations. The shift occurred for several reasons. The most important reason is that the BPM tools in the market, at least those with work flow engines, were found to be ill suited for most human-centric processes. They weren’t the right tools and they still are not the right tools. If we have learned anything, we have learned that BPMS products are actually the wrong tool to use for improving business processes heavy with human interaction. Now if you’re a software vendor what are you going to do? Focus on where you can be successful, where you can sell your products, and just make sure your “story” is properly prepared for your audience. This is exactly what has happened and the messaging from this market segment has purposely redefined the term “Business Process Management” on a broad basis to fit what is effectively nothing more (or less) than the next iteration of Application Integration software and intimately linked to another IT-centric concept – Service Oriented Architecture (SOA). This is where the vast majority of vendors and market share in the BPMS market exists today, and is the basis for revenue, market growth and market predictions cited by industry analysts. The one hold-out in the software market is Business Process Analysis software. Many of these products still provide value-added capabilities for improving human-centric business processes although the technology is used by people to identify opportunities for improvement - not to implement the improvements or perform work flow functions. 
Those “business” business processes have been left to the Business Process Analysis software market and even more so to the business consulting market. This is reflected in the commercial market break-down as follows:

BPM Commercial Market Segments

1) Executable BPM software: Software that is executable, meaning it moves data (in one of a number of forms) from interaction to interaction (between any combination of systems and people).
2) Non-Executable BPM software: Software that is used to plan, manage, architect, analyze and visualize “processes.”
3) Technical Consulting services: Services that design, manage, implement and maintain executable BPM software and/or other software to achieve a similar result.
4) Process Consulting services: Services that help organizations address problems from a “process” perspective, deal with the change requirements associated with “business” changes, and lead improvement initiatives.

BPM Focus Areas

The focus areas of each of these segments in the commercial BPM market shed much light on how the different problems that people face are addressed by different segments of the BPM commercial market. In fact, it is the problem area commonly dealt with by each of the market segments that tells us what we should expect to “get” from expenditures in each one. Knowing what problem we are attempting to solve, what value we will receive from that, and what problems we aren’t going to solve is a critical milestone in the evaluation process. Organizations that fail to perform this analysis have a far greater likelihood of suffering disappointment, failure to reach goals, disillusionment and an ongoing sense of frustration. To date there has been no significant increase in proper leading analysis of BPM even though the market is more mature and process initiatives are more likely to successfully complete.
While the commercial market overall continues to grow at a steady pace, there is a general frustration from “insiders” – at least within the Executable BPM software and Technical Consulting services segments – that the market just won’t seem to “pop.” The majority of third-party analytical and research markets continue to predict a sharp increase in BPM market growth. Again, it is the focus areas that help us bring this into perspective and highlight the factors inhibiting market “explosion.”

Systems-based Cost Reduction (Integration)

Commercial Market Segments: Executable BPM software, Non-Executable BPM software, Technical Consulting services

The systems-based approach to cost reduction is based on the perspective of the technology in use behind the processes in our organizations. In this approach to BPM cost reduction, the processes in the organization are looked at from the perspective of the technology that supports them and the role the process serves. Then the process is “moved” to a more organized, streamlined and updated process model to reduce the operating cost of the process. Cost reduction benefits from systems-based BPM initiatives come from a mixture of automation, process streamlining, error reduction, elimination of duplication, and better control over processes at both the work level (people doing work in the process) and management level. Return on Investment (ROI) in systems-based BPM cost reduction is more often than not a workload reduction. It is extremely important that we understand this result, as in and of itself workload reduction does not translate directly into tangible ROI. Workload reduction potentially increases capacity and it frees up resources for other work to be done, but in most cases it does not reduce the actual operating costs of the business in any significant way. That gain is usually only achieved when the reduction in workload (i.e. resources now available for doing other things without increasing costs) can be used to accomplish something else of financial benefit to the organization. In most cases organizations taking the systems-based BPM approach are making this linkage at the departmental level – or not at all – limiting the real benefit the organization realizes from the BPM initiative. Software companies that provide configurable applications with embedded processes in them (like ERP, CRM, etc.) and BPMS software products are also focused on this form of cost reduction. Systems-based cost reduction approaches the opportunity of cost reduction very similarly to the approach taken in manufacturing. In manufacturing we make things. Those things are made using machines, and the act of making them is a process. Anything we can do that reduces our cost in making our “things” is an improvement – as long as it doesn’t reduce quality and it doesn’t create new costs. The Executable BPM market is now predominantly focused on this problem-solution. This is why Executable BPM software is more often used in back-end systems, IT, HR and customer service areas. Back-end systems and IT are really the same problem. If you think about the plumbing, electricity, networking, communication, etc. for homes as the home’s “back-end,” these services have to be there and they need to work right - often better than they do now. In our businesses, this also includes many of the “appliances” we have in our organizations, including computers and the programs we use on those computers every day. This makes a lot of sense. Back-end systems and IT “processes” are already within the ownership domain of the people who approve such software purchases. HR processes are usually either not well understood to start with or generally not very well organized.
HR and other indirect process areas that do not handle the finances of the company are generally perceived as “necessary overhead” without being of “critical dependence” in the successful operation of the organization. The low risk and perceived benefit arising from improving these areas is very hard for many managers to resist. However, benefits from these initiatives have rarely stood the test of measurable ROI as a financial impact and often times the initiatives run into change issues that compromise the original goal. Because Customer Service is looked at by most companies as a Cost Center, there is a very strong desire to use less expensive labor, reduce inefficiencies wherever possible, and automate pretty much anything that can be automated – even if it shouldn’t be. This is the reason why customer service is often targeted in BPM initiatives although it is not a “back-end” systems issue. The vast majority of implementations in customer service where costs are reduced have the knock-on effect of reduced Customer Satisfaction – due to the emphasis on internal measures. Process-Based Cost Reduction (Change) Commercial Market Segments: Non-Executable BPM software, Process Consulting services When the focus moves to changing how work gets done in the organization the commercial market immediately moves away from the IT professionals directly into the arena of Business Analysts and Business Consultants. To help place this into perspective it should be noted that the process-based cost reduction focus is where we find the various improvement methodologies such as Six Sigma, Lean, TQM, CEM, Change Management, and so on. While these process initiatives were originally at the center of the “bulls-eye” for the Executable BPM software market segment such is no longer the case for the reasons already discussed. 
There are also indications of a new BPM market beginning to form that attempts to directly address the problems associated with managing and changing human-centric processes. That market will be covered at the end of this report. Process-based cost reduction seeks to improve existing “processes” by refining how work gets done in the organization. This is why Six Sigma and Lean have quickly been “crossed over” into the process market, as these improvement methods or philosophies are specifically intended to help organizations optimize existing operations heavy with human interaction. However, this market has also fallen dramatically short of its perceived value creation. The problem again comes from the fact that, like BPMS products, Six Sigma, Lean, TQM, etc. are based on taking the “AS IS” process and refining it to an improved design of the “TO BE” process definition. The reason why this approach is so limited is that in general the philosophical approach for these types of techniques accepts the basic process shape (the AS IS) as valid, seeking to refine the process shape within the current constrained model of the process. Change Management and CEM are the two areas where this is not the case. In these approaches the incoming perspective is based on the idea that there are fundamental changes that must be made to bring business processes into alignment with the current environment. The assumption within these approaches is that how work gets done now is based on assumptions that may have been relevant at some time in the past but are no longer relevant to how we need to do business today. Change Management tends to look at this from an isolated perspective that assumes succeeding in bringing processes into relevance is a complicated and lengthy endeavor that is performed periodically to “update” the organization to the modern paradigm.
Approaches like CEM look at the need to change on a regular basis in very short time frames with the idea that the “approach” becomes an ongoing behavior of change in step with the changes in context as they occur. The other impact of significance is the issue of AS IS process complexity, where understanding the “model” requires a high level of attention and focus, leaving little for challenging the process as a holistic entity (like the adage that sometimes we can’t see the forest for the trees). Even though Change Management does seek to challenge the “status quo,” it traditionally falls prey to the complexity issue. CEM is the only practice in the market that deals directly with the issue of complexity.

No Bridge for the “GAP”

Much has been said about the need to bridge the gap between business interests and the application of technology. Business Process Management was originally believed to be a means to do just that, along with the cost reduction and quality improvements expected from it. That has not happened – not at all. While in the early days of the BPM software market there was significant effort placed on helping to address the “gap” issue, the movement by the majority of the BPM market to primarily Application Integration has dissolved any gains made by early market leaders. Now, rather than being a bridge across the business-technology gap, for most of the Business Process Management software market BPM tools have become part of the gap - and a contributor to the dysfunctional effects that having the “gap” imposes on the organization. One of the most obvious movements in this regard is the now broad adherence to industry standards and best practices that have arisen purely out of the logical, rational, and structured approach required in the use and application of technology.
What has now become for many the very “fundamentals” of Business Process Management are completely ignored by the business people on the other side of the gap due to the lack of relevance to their perspective, work activities, interests and concerns. Another part of the change in the BPM market is the number of people involved in propagating certain beliefs. Because the software market and the adjunct markets it has spawned off (media, research, conferences, etc.) are extremely well financed there has been a tremendous amount of money spent in developing a large consensus of opinion in regards to a “BPM Market Message.” This consensus echoes the technology viewpoint and has caused many business people to back away from any technology associated with Business Process Management. The simple fact is that the gap between business and technology is now wider than it was 10 years ago and growing. More and more business people are turning a deaf ear to any discussion around BPM that includes technology. The commercial market has solidified on the split, with one side serving IT interests and the other side serving business interests. BPM as a commercial offering is no longer part of the solution to “bridging the gap.” Depending on the type of product or service consumed from within the BPM market, Business Process Management is either addressing Business Issues or Technology issues – not the disparity between the two. Lessons Learned There is, however, what could be the beginnings of a silver lining in the BPM clouds beginning to emerge. From lessons learned in attempting to address business issues and bridging the business-technology gap certain things have been learned, and some steps to address these issues have begun to emerge in the software market. 1) Lessons Learned – The Need for Individual Process Orchestration What have we learned? We have learned that business activities do not conform to the traditional work flow technology paradigm. 
What really happens is that the “processes” business people are engaged in have a high degree of individual variance and adjustment to contextual influence that affects how work gets done on a daily basis. We now know that in general business processes (as the concept was originally defined) are fraught with the high degree of variability that occurs anytime people are the primary performers in the actions of a process. Therefore any technology used to support these processes must also have the ability to flex and change on an as-needed basis. This includes the ability of supporting technology to flex and change per individual as they are doing their work – enabling people to “orchestrate” their own behavior in the process. We also know that for this kind of individual process orchestration to work within supporting technology the individual orchestration cannot impose additional “work” on the individual. This means that supporting software for these business processes must grant individual users the ability to “do” their individual process orchestration by simply doing their work their own way. Several niche players in the BPM software market now offer this ability in at least some form within their products, primarily vendors that are focused on solving specific business issues faced by their target market.

2) Lessons Learned – Work Flow Queues are Extremely Limited

One of the common approaches in BPMS products is to build work flow models of processes and then present the work each person is supposed to “do” in a work queue interface. While this works well when the general concept of a task list is already part of the existing work metaphor, it does not work well at all when the task list metaphor is not already in place. What we have learned is that presenting new software interfaces to people in general creates a change issue that must be dealt with.
When changing an existing interface from a design that is difficult to use and unwieldy for the people it serves - a simple, well designed and more useful interface will present a change impact that is very low. When changing from an existing interface to a new one that is not obviously simpler and easier to use (to the people using it) the change impact increases dramatically. Basically, when we change these interfaces in a way that the people using them immediately can see the benefit, the change impact is very low. As soon as we expect people to learn something “new” before the “benefit” becomes noticeable the change impact becomes significant. Where no task list metaphor exists, imposing a new interface on the people doing the work represents a very high change impact. Again, if the benefit to the people who must use the new interface is obvious to them then the change impact becomes low but if that benefit is not obvious the challenge in getting people to make the change will be very high, very costly, and is highly likely to significantly reduce the benefit expected from the implementation of the underlying technology. What we now know is that somehow Business Process Management technology for business users must move away from the general concept of “work queues” and “work queue interfaces.” What business users need is something that works within their current technology comfort zone to help them stay “in sync” with their work in a way that does not impose new activities on them. Because this can take many different forms and be supported through many existing technologies already in use by the business the means by which this is accomplished is highly variable. Numerous BPM vendors have approached this as an extension of their basic concepts of queues and work flow with interfaces into email, email clients, and mobile devices but there is still a long way to go in really solving this problem. 
3) Lessons Learned – The Need to “Capture” Reality

As discussed earlier, the processes business users engage in are highly personalized and variant. When we “solve” the issue of how to enable them to engage with underlying BPM technology in a way that allows them to self-orchestrate their behavior in a process (without thinking about it that way) we will have the opportunity to start capturing behaviors of our people that we can learn from to improve our overall business operations. This is actually brushing against something entirely new in the world of business software. Rather than embedding “domain knowledge” into a process so that we can impose “best practice” on everyone in our organization, there is the potential to capture significant behavioral information that can be related to both context and results. This behavioral information related to context and results represents a wealth of learning we can tap to improve our organizations. Why did one sales person achieve such high results in a given market during a time when others did not? Why does one department, shift or group get numerous compliments from our customers when others don’t? Why are certain people always on time with their work when others are not? These types of questions can start to be answered when we reach the place where our underlying BPM software is capturing individual behavioral nuance within context and in connection with results. Again, in a few cases there are BPM software products that have touched on these kinds of benefits but only at an extremely simplified level. The problem is complex, because to actually capture this kind of information the underlying BPM software must effectively be “unnoticed” by the user.
This represents a fundamental shift in how we think about BPM software although it is the future of the product genre - though it may well be called something entirely different to help distinguish it from the Application Integration message dominant in the commercial market today. 4) Lessons Learned – Unstructured or Free-forming Processes We have also learned that a number of our highest value processes are really ad hoc processes that form within a known framework of relationships. These processes do not have a predetermined start and end point, they form where they are needed, when they are needed and exist until they complete. For example, a new product offering may start in a number of places such as product development, marketing, research, strategy or executive planning sessions. Depending on what the new product is and how it relates to the other products of the company the “process” may involve any number of functions, departments and activities many of which can be performed in parallel. From a business perspective what we want is for these processes to operate as smoothly as possible, with timely communication, information sharing and event awareness. Because the relationships behind these ad hoc processes can be identified there can also be structure placed behind these kinds of processes that simplifies both the creation and execution of these ad hoc processes. The challenge though is that these types of processes do not fit the classic BPM work flow perspective of process and are ill suited to BPMS products. While there are a number of interesting technology twists that can help address this issue already in the market they are seldom even given the label of “BPM” and generally do not address all of the challenging aspects these kinds of processes create for the organization. 5) Lessons Learned – The Customer is Nowhere in Sight The final lesson learned is that the BPM market (except for CEM) has abandoned the customer. 
What is meant by this is that those processes which represent what the customer experiences (the processes that are the customer experience) are not being dealt with by any of the commercial BPM market segments as end-to-end customer processes. What we have learned is that these customer processes – the end-to-end processes that are what the customer experiences – are the relationship with the customer. It’s hard to believe that anyone would not consider these processes as the most important processes of the organization. Yet very few organizations have actually documented these end-to-end customer experience processes and even fewer have taken the steps to own them by shaping these processes to produce a known and desired experience for the customer that is specifically optimized to make the customer’s life simpler, easier and more successful. Considering that these experiences are the business – from the customer’s point of view – and that these processes directly impact our ability to grow customer loyalty and expand the customer relationship it seems obvious that these processes would be first and foremost on our agenda for Business Process Management. That is not the case in the BPM commercial market with the exception of CEM and specific one-off consultancy practices. BPM Practice Guidance While this report exposes a number of misconceptions about the BPM market including a significant amount of industry hype that is being used to promote sales, it also recognizes the value of each segment of the BPM commercial market. The use of Executable BPM products is appropriate for Application Integration and existing practices that include the concept of “job-tickets” and “to do lists.” It can also create value in some cases where an existing process is very costly due to poor management that has failed to successfully address a known and costly issue in the organization. 
Non-executable BPM software can certainly be used to document existing processes and to help improve them, just as professional services can using any of the techniques available in that market. Some Executable BPM products are focused on improving repeatable processes that truly are business processes. While these products are likely to create real value when applied in this way, they are still limited to a pre-defined way of thinking about the process and their value creation potential is tightly constrained. Executable BPM products can play a value-add role in agility (this is the BPM-SOA connection technologists care about) if the right decisions are made as to how that systems-based agility will break down within the organization. Tying this systems-agility initiative to strategic plans of the business through explicit milestones is a very compelling way to move this technology opportunity forward successfully. Considering the economic challenges faced on a world-wide basis entering into 2009, it is imperative that decisions on BPM at every interest level in the business be considered clearly and thoughtfully. Knowing what we are using a given commercial offering for, why it will create value and what it isn’t going to do for the organization are the necessary ingredients of successful BPM strategy. Employing a BPM strategy that does not include these ingredients is foolhardy in the extreme. And in an economic slowdown like the one we are experiencing now, that kind of mistake can literally take a business “out of the game.”

About the Author: Terry Schurter is a Director of the nonprofit International Process and Performance Institute, former CEO of Bennu Group, Research Director for Process with Bloor Research and CIO of the BPM Group. An internationally recognized thought leader in Business Process Management, Performance Management, and Customer Expectation Management, he received the Global Thought Leadership Award in 2007 from the 20,000+ member BPM Group.
Terry has founded, co-founded and served as an executive officer for multiple technology startups, won international awards for Thought Leadership and Engineering Excellence (George Westinghouse Signature of Excellence Awards – 1990; 1991) and is an author – contributing author of several books including "Customer Expectation Management - Success without Exception". Terry Schurter Global Thought Leader Director International Process and Performance Institute www.ipapi.org www.tschurter.com |

| Written by Mukanda Mbualungu |

| BPM Architecture Considerations

Introduction

This paper outlines three sets of key architecture considerations required for a successful configuration of an enterprise Business Process Management (BPM) implementation and deployment. These considerations are:

I. Deployment Environments
II. Architecture Options
III. Hardware and Database Sizing

In addition to architecture considerations, another important success criterion for BPM implementations is ensuring that the organization’s IT group is involved in the early stages of the BPM tool selection process. Many decisions made and issues uncovered in the early stages will have long-term consequences and will be much more difficult to resolve after the BPM tool has been selected. When IT is involved early, there is a much better opportunity to balance business and technology factors.

I. Deployment Environments

Although this issue may seem obvious for any software deployment, it is nonetheless important to address how the BPM architecture will be configured, in order to dispel any preconceived ideas. Typically there are four distinct (yet related) environments that need to be configured during the course of a BPM implementation. These environments are:

There are subtle differences in the way the #3 and #4 environments above are configured. Each will have its own architecture options and sizing as discussed in the next sections.

II. Architecture Options

Depending on the BPM tool selected, there are multiple architecture options to be considered. Below we have identified the four options that are most commonly used and typically most appropriate for a BPM implementation. The selection of these options for each environment depends on factors such as existing IT infrastructure, budget, and the solutions to be deployed.

III. Hardware and Database Sizing

In addition to the architecture options, other considerations for architecting a BPM solution relate to calculating and estimating hardware and database sizing. These considerations are:

The considerations above that involve specific quantities relate directly to hardware performance: the more users and process instances involved, the greater the computing capacity required by the BPM engine orchestrating the process. The benchmark results will determine the hardware (CPU, memory and hard disk) and database sizing recommendations for the BPM tool.
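As a rough illustration of how user and process-instance counts feed a sizing estimate, the following Python sketch turns a workload description into a database size and an engine node count. It is only a back-of-the-envelope model under stated assumptions: the `Workload` fields, the per-instance storage figure and the `users_per_node` benchmark value are all hypothetical placeholders that would have to come from the selected BPM tool's own benchmark results.

```python
import math
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical sizing inputs; none of these names come from a real BPM product."""
    concurrent_users: int      # users working in the BPM tool at the same time
    instances_per_day: int     # process instances started per day
    kb_per_instance: float     # assumed storage per instance (data plus audit trail)
    retention_days: int        # how long completed instances are kept online


def estimate_db_gb(w: Workload) -> float:
    """Database size in GB: instances/day x KB/instance x retention days."""
    total_kb = w.instances_per_day * w.kb_per_instance * w.retention_days
    return total_kb / (1024 * 1024)


def estimate_engine_nodes(w: Workload, users_per_node: int) -> int:
    """Engine nodes needed, given a benchmarked users-per-node figure (assumed)."""
    return max(1, math.ceil(w.concurrent_users / users_per_node))


if __name__ == "__main__":
    workload = Workload(concurrent_users=500, instances_per_day=2000,
                        kb_per_instance=50.0, retention_days=365)
    print(f"Database: about {estimate_db_gb(workload):.1f} GB")
    print(f"Engine nodes: {estimate_engine_nodes(workload, users_per_node=200)}")
```

The point of the sketch is only that both estimates scale linearly with the quantities named above; a real recommendation would replace the placeholder figures with measured benchmark values for each environment.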

--------------------------------------------- The author, Mukanda Mbualungu, is the Technical Director for SRA's Business Process Management Group (http://www.sra.com/bpm) |

| Written by Keith Swenson |

Reexamining the Limitations, Expectations, Capabilities and Misunderstandings of BPEL, as well as Executing BPMN Directly

It seems that conventional wisdom has held for a while that "Business Process Execution Language" (BPEL, also known as BPEL4WS or WS-BPEL) is the standard for execution in the BPM space. At the same time, the majority of BPM and workflow products on the market today work successfully without using BPEL. Some say that those products that don't implement BPEL are simply dragging their feet. Others say it is not possible to do what their product does in BPEL. Whom are we to believe? It is, after all, a complex subject.

Recently an article on InfoQ took a particular process scenario drawn in Business Process Modeling Notation (BPMN) and investigated in detail why it cannot be implemented using BPEL. That process can, however, be run on a system that directly executes BPMN. This article explores exactly how this is possible, giving a step-by-step example of a running system executing the process in question. Since you can execute the BPMN directly, it raises the question: why translate to BPEL at all?

Finally, a well-considered and detailed article on the limitations of the BPEL approach. There are a few vendors who promote BPEL as the one-and-only-true-way to support BPM. In fact, it is good for some things, but fairly bad at a large number of other things. It is my experience that BPEL is promoted primarily by vendors who specialize in products we might rightly call “Enterprise Application Integration” (EAI). These companies have recently taken to calling their products “Business Process Management”. Potential users should be asking the question “Is BPEL appropriate for what I want to do?” To that end, there should be a large number of articles discussing what BPEL is good for, and what it is not, but there are very few articles of this nature.

The article “Why BPEL is not the holy grail for BPM” explores the promises and claims of the BPEL proponents and finds some serious gaps in what BPEL delivers. It starts by citing a reference that everyone should know from the BPMN FAQ: “By design there are some limitations on the process topologies that can be described in BPEL, so it is possible to represent processes in BPMN that cannot be mapped to BPEL”. In my experience, fans of BPEL make the following assumptions:

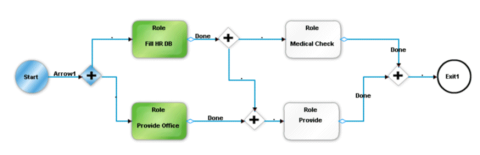

Assumptions (1) and (2) are valid in some situations; the product category that we call EAI is in fact configured primarily by programmers, and needs only to send, receive, and manipulate bytes of data. So for EAI, BPEL might be a reasonable choice. But many BPM products are designed to be configured by non-programmers, by the business people themselves (and that is indeed why we call them business processes in the first place). Non-BPEL approaches exist that allow non-programmers to draw up and execute processes, safely and reliably. Those processes are qualitatively different from the processes a programmer might draw up, and many people familiar with EAI-style “BPM” are incredulous, but that is basically circular reasoning based on the assumption that the process designer is a programmer. To be fair, some believe that business people will draw up initial process diagrams that will then be fixed up by programmers. But there are other situations where there is no programmer at all, and in those situations BPEL would be a poor choice.

Assumption (3) drives people to think that since there is overwhelming “magazine” evidence that BPEL is the right standard, anyone not supporting BPEL is simply too lazy or trying to disrupt the marketplace. Unfortunately for these people, the process world is inherently more complex than they realize; the variations among approaches are not simply the randomness of vendor whim, but in fact an appropriate response to the variations in the needs for process support. People should keep in mind real requirements: if BPEL meets the need, then fine, but don’t blindly assume that one size will necessarily fit all. We need more good articles on the situations in which BPEL is, and is not, useful. And let us view with great suspicion any report that states that BPEL is unconditionally the right architecture for all processes.
The article “Why BPEL is not the holy grail for BPM” presents a scenario for implementation which is difficult for BPEL-based products to actually execute. It presented a particular product based on BPEL that was not able to execute this diagram. What about products that are based on executing the BPMN directly without conversion? Fujitsu Interstage BPM is such a product. You draw the BPMN diagram and submit it to the server, and the server executes it directly. It has no problem executing the diagram as drawn. Here is the diagram as drawn in the Fujitsu Studio:

Some slight modifications: Instead of an “activity” receiving the start message, I use a start event which receives the message. I don’t understand why people draw processes that start and then immediately go to an activity that waits for something; a start event does this much more clearly. There are many who feel that waiting for a message should always be done by an event node, and never an activity node, and that certainly seems to make sense to me. Using the start node to be triggered by the incoming message has the same semantics. Similarly, the original diagram had a final activity which was not really an activity.

This is saved as XPDL. I do not say that this is transformed to XPDL, because there is no transformation. The diagram above is expressed directly, one-for-one, in XPDL, which is simply a file format for storing BPMN diagrams. When you read the XPDL back in, the same BPMN diagram as you see above is displayed. XPDL is simply used as a file format to transfer BPMN diagrams around. The XPDL file is imported into the server through a web form:

Then in the server the process definition is displayed as BPMN using the Flash-based process diagram viewer:

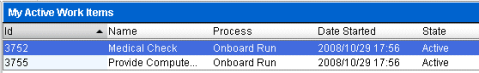
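To make the walkthrough that follows easier to trace, here is a minimal Python sketch of a token-passing interpreter that runs a diagram directly, with an AND node that waits for all of its inputs. This is not how Interstage BPM is implemented; the `ProcessInstance` class, the `FLOWS` table and the `AND_JOINS` table are hypothetical names, and the topology is only inferred from the steps described in this article (the way the process reaches its end is an assumption: it is treated as finished once no workitems remain).

```python
from collections import defaultdict

# Assumed topology, inferred from the walkthrough: node -> nodes it activates.
FLOWS = {
    "start": ["Provide Office", "Fill HR DB"],   # two parallel activities
    "Provide Office": ["AND"],
    "Fill HR DB": ["Medical Check", "AND"],
    "AND": ["Provide Computer"],
    "Medical Check": [],
    "Provide Computer": [],
}
AND_JOINS = {"AND": 2}  # join node -> number of inputs it must receive


class ProcessInstance:
    """Interprets the flow table directly; no translation to another model."""

    def __init__(self, flows, and_joins):
        self.flows = flows
        self.and_joins = and_joins
        self.worklist = []                 # active workitems, in activation order
        self.arrived = defaultdict(int)    # inputs received so far per AND node
        self._leave("start")

    def _leave(self, node):
        # Send an event along every outgoing flow of the node.
        for target in self.flows[node]:
            self._enter(target)

    def _enter(self, node):
        if node in self.and_joins:
            # An AND node holds progress until every input has arrived.
            self.arrived[node] += 1
            if self.arrived[node] == self.and_joins[node]:
                self.arrived[node] = 0
                self._leave(node)
        else:
            self.worklist.append(node)

    def complete(self, workitem):
        self.worklist.remove(workitem)
        self._leave(workitem)

    @property
    def finished(self):
        return not self.worklist


if __name__ == "__main__":
    process = ProcessInstance(FLOWS, AND_JOINS)
    for item in ["Provide Office", "Fill HR DB", "Medical Check", "Provide Computer"]:
        print("worklist:", process.worklist)
        process.complete(item)
    print("finished:", process.finished)
```

Completing the workitems in the same order as the steps below should leave the sketch's worklist with two, one, two, one and finally zero items, mirroring the worklists described in each step.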

Step 1

The worklist has two workitems on it, “Provide Office” and “Fill HR DB”: And this can be visualized graphically as: Because these steps are in parallel, they could be completed in either order. To demonstrate how the AND node holds up progress until both inputs are received, let's complete “Provide Office” first.

Step 2

One workitem is available: And this can be visualized graphically as: The “Fill HR DB” workitem has not been completed, and so that task is still on the list. The “Provide Computer” step cannot be started before the “Fill HR DB” step is completed, because the AND node requires input from both directions. There is only one active activity (indicated with green) and so we have one workitem. The AND node is colored green here to show that it has received one, but not both, of its inputs. We complete the “Fill HR DB” workitem:

Step 3

This has two workitems available again: And this can be visualized as: Completing “Fill HR DB” sent an event to the “Medical Check” activity to activate it, as well as to the AND node, satisfying that and causing “Provide Computer” to be activated as well. So we have two activities active at this time. Again, these are in parallel, and so they could be completed in either order, so let's choose to complete the “Medical Check” workitem.

Step 4

We are left with only one workitem: And this can be visualized as:

Step 5

Now that we have completed the process, there are no workitems: And this can be visualized as:

Summary

So you see, the BPMN can be executed directly. There is no need to translate into any other form. The process is transferred to the server as a BPMN diagram, using XPDL as a file format. The diagram is interpreted directly without conversion to another model. You can execute diagrams that are not possible with BPEL. And the advantage is that you can directly see how the process is running. Why again do analysts recommend BPEL? It seems to me in this scenario to be nothing but a limitation.

ABOUT THE AUTHOR: Keith Swenson is Vice President of Research and Development at Fujitsu Computer Systems Corporation, as well as Chairman of the Technical Committee of the Workflow Management Coalition (WfMC). He is known for having been a pioneer in web services, has helped the development of standards such as WfMC Interface 2, OMG Workflow Interface, SWAP, Wf-XML, AWSP and WSCI, and is currently working on standards such as XPDL and ASAP. He has led efforts to develop software products to support work teams at MS2, Netscape, and Ashton Tate. In 2004 he was awarded the Marvin L. Manheim Award for outstanding contributions in the field of workflow. |

| Written by Terry Schurter |

This paper looks at the importance of the “processes” that are created by every organization as experienced by that organization’s customers. These “customer processes” are rarely documented, designed, managed or controlled as “end-to-end” processes even though they represent our entire relationship with our customers. Led by the insights of Richard Normann, there has evolved a way to “look” at these processes, design them, manage them, control them and innovate on them to dramatically increase perceived customer value and to move the organization “up” into a higher-level customer value chain.

At the heart of every customer experience are Moments of Truth. Richard Normann first articulated them; Jan Carlzon turned SAS around with them and subsequently wrote the book “Moments of Truth.” Innovation on Moments of Truth is fertile ground for building customer loyalty, expanding market share, and remaking entire marketplaces. Sir Richard Branson of Virgin uses this type of innovation on a regular basis. The largest tire retailer in the US built its $1.5 billion business using it. State Farm lives and breathes it every day. What’s stopping us from doing the same? Nothing but ourselves...

The innovation I am talking about is how some businesses are able to look at the experience the customer has when interacting with the business and challenge it. They challenge the shape of that interaction at a fundamental level, seeking a way to make the interaction simpler, easier and more successful in the eyes of their customers. They do it by challenging the Moments of Truth in the process and eliminating as many as they possibly can.

Moments of Truth

You’ve probably heard the term “Moments of Truth.” You may even use the concept in your own process work. You may even attribute the concept to someone in recent times.
But the term Moments of Truth was first articulated as part of a management philosophy by a man named Richard Normann. Though not nearly as well known as other management thought leaders, Richard Normann holds a special place in the hearts of those who do know about him. Considered by many of them to be one of the most visionary business luminaries of our time, Richard Normann developed the foundation of customer-centric value chain thinking with Moments of Truth as the central idea around which his insights were built. It was Richard Normann who inspired Jan Carlzon. It was Richard Normann who brought to our attention the fact that the experiences we provide to our customers are primarily formed by these Moments of Truth, and that by owning and managing them we can transform the customer relationship into something that delights our customers, astounds our competitors and enthuses our employees.

Our Context is our Limiting Framework

People are motivated by their personal drive and character or by the context that surrounds them. While an economic “downturn” is unneeded motivation for some, for others it’s these bumps in the road that get us up and out of our chairs, moving, thinking and acting differently. In many ways a challenging business market is the business leader’s Moment of Truth. But Moments of Truth with our customers are what really matter, and innovating around Moments of Truth in customer interactions represents the greatest opportunity to improve business success, create customer loyalty and expand market share.

What are Moments of Truth?

Moments of Truth are any – and every – interaction with the customer. First articulated by Richard Normann (considered by many to be one of the most profound and sophisticated management thinkers of our time) in the mid-1970s, Moments of Truth are the heart and soul of the experience customers have with the businesses they patronize.
They are also the single most influential source of customer dissatisfaction. As a general rule that applies to virtually any industry, excess Moments of Truth are the number one limitation to businesses achieving their full potential. You may recall the SAS turnaround led by Jan Carlzon back in the early 1980s. This is the most popularized use of Richard Normann’s customer value chain insights, using Moments of Truth to challenge the way the business interacts with its customers. Where Normann theorized, Carlzon implemented, and the astounding turnaround results led to Carlzon’s publication of the book Moments of Truth.

The basic principle goes like this. When customers engage with a business for any reason they end up participating in a “process.” That process, as the customer experiences it (from their point of view), is the customer relationship. From the customer’s point of view there is nothing else. Each and every customer interaction in all of these “customer processes” will leave an impression on our customers, and every single interaction is a potential point of failure. Customer dissatisfaction comes from two places: interactions the customer deems unnecessary and interactions the customer deems inappropriate or unsuccessful. In all of these interactions the customer is judge, jury and, when needed, executioner. The business is not an equal partner in this relationship. The customer has all of the power simply because the customer can take their business elsewhere any time they choose to do so.

It sounds as if our customers aren’t very reasonable and that we are at the mercy of their whims, wherever those may take them, doesn’t it? That’s not how it works, though. Customers are generally reasonable, and they also act on comparisons. In its simplest form, providing an experience that is better than our competitors’ is usually enough to tip the scales in favor of increased business success.
Yet understanding the concept of Moments of Truth and the customer experiences we create can be very empowering to business leaders as a way to create significant differentiation. For example, if we asked the question “How might we innovate to increase the value of what we offer to our customers?” what might come of that? There are a number of companies that have done just that. Here are several examples.

Discount Tire

Looking at the market from the customer's point of view, in the 1960s Discount Tire decided to run its business by creating a definition for success (customer success) that fulfilled an unmet need in the market. This led to Discount Tire running its business with low prices and no up-sell, employing staff trained to give objective advice on tire selection appropriate to the needs and goals of each customer, and delivering services promptly and courteously. Those Moments of Truth in the typical up-sell and cross-sell “process” were suddenly gone.

How do you get to $1.5 billion in revenue in a crowded, low-margin business like retail tires as a start-up? Not by adding in extra services. That is another big difference with Discount Tire. The company became an organization of tire experts. It only does tires and tire-related services. You can’t get your oil changed at Discount Tire because oil changes are not related to tires.

Another interesting move by Discount Tire was the decision to deliver flat tire repair to customers for free. Again, focusing on the customer and customer success, Discount Tire decided to give away a high-value (to the business) industry service - the repairing of customers’ flat tires - for free. Now, having a flat tire is certainly a Moment of Truth for customers, but having those flats fixed for FREE is a Moment of Magic – eliminating the additional Moments of Truth we would normally encounter in attempting to get our flat tire fixed.
Discount Tire is the company that fixes its customers’ flat tires for free, doesn’t up-sell, offers very low prices on a broad selection of tires, has objective tire experts for employees, and gets you in and out fast. That value proposition and delivery of success has turned the tire sales and service industry upside-down.

Virgin Mobile USA

Virgin Mobile entered the US market with a bang by offering a newly defined cellular service value proposition - pay as you go (and more). Certainly the number one customer expectation that Virgin identified as a competitive differentiator was the elimination of the onerous “cellular service contract.” The service contract is a characteristic of the existing cellular service market, loosely disguised as a “customer benefit.” This “benefit” is created by making non-contract service unattractive from a pricing standpoint. Why does the service contract exist in this form? It exists as a mechanism for cellular service providers to “push” a product of predetermined customer lifecycle duration, because the industry as a whole has been unable to establish a profitable customer lifecycle duration due to consistent failure on customer expectations. The practice (and product) exists as a mechanism to ensure some profit to the cellular service provider.

Virgin saw this as an opportunity to define a new customer value proposition that would place it at a competitive advantage for a meaningful portion of the cellular service market. Virgin introduced a new service, pay as you go, that requires no service contract. You simply pay as you go. If you documented the “process” you go through as a customer in “buying” cellular service in the traditional form, you would find it fraught with Moments of Truth. It was not that long ago that most people experienced a “process” that took in excess of 30 minutes to complete just to get a cell phone and service. With Virgin Mobile USA’s approach it can literally take 5 minutes or less.
Buy a phone, any phone you want, and go. Charge it any number of ways. No contracts, no credit history, no credit cards, very few Moments of Truth. The results of the strategy are compelling, with Virgin Mobile USA touting an average growth rate of 1 million new customers per year.

State Farm Insurance

Like a good neighbor, State Farm is there... and the company takes that motto very seriously. It may be surprising to find that State Farm as a company believes very strongly in the unique and important purpose of insurance – the ability to mitigate the impact of unforeseeable and uncommon events by aggregating the risk over a large “pool” of people – but this is exactly what it does believe. It makes sense, is a valuable service and stands alone as a business case, yet few insurance companies operate that way anymore. So for State Farm customers, when something “bad” happens State Farm is “there” with incredibly simple “processes” for getting whatever happened put to rights.

You know, the whole “good neighbor” thing comes from times past when a family would suffer a tragedy such as their house burning down. Their neighbors would take the distraught people into their homes, then organize a “house raising” to get the family back into a new home quickly through the combined efforts of many. State Farm still retains much of that mindset, and its customer processes are very streamlined – often imposing no extra activity on the customer or even taking steps to reduce the activity the customer must do to restore things to the way they were. When contrasted with one competitor, State Farm’s homeowner claims process had 3 Moments of Truth while the competitor’s had 13. Which process do you think you would want to “experience” when you’re trying to get something fixed on your home?

Virgin Money

It is not surprising to find another of Sir Richard’s companies on the list of companies that have innovated around the customer value chain and Moments of Truth.
The most recent venture, Virgin Money, dramatically changes several customer processes and one interesting company process. The new offering goes like this. There are people who have extra money. There are also people who need extra money. Some of the people from these two groups know each other. Yet lending between “friends and family” is fraught with high risk on the investor’s side – because “friends and family” are NOT good at paying back loans. Enter Virgin Money. Need a loan from rich Aunt Bessie? Sure. Virgin Money will provide the service that initiates the loan to you just like any other Virgin loan. You get your money, Aunt Bessie actually gets paid back (with interest, by the way) and Virgin Money makes money by seamlessly providing the financial services needed to make this work just like a loan from a bank.

That completely changes the customer process for anyone getting a loan from a family member, because the interest rate, terms and approval process are whatever the family member says they are... that’s quite a twist. The family member with the money suddenly has a clearly priced service that makes it easy to put their extra money to work for them while it helps someone they want to help. So Aunt Bessie can now put her money to work for her without the fear of never seeing a penny of it again.

It’s interesting, too, because this kind of service is likely to bring working capital back into the economy that would otherwise be held back and protected. So, depending on the uptake of this service, it could have a positive impact on the global economy by increasing available capital in the marketplace. While that may not happen in any directly observable way, it is likely to make some impact, which is the final point – real innovation creates a value proposition that has positive knock-on effects.

ROI Times Two

Of course it’s not just the increased value to the customer that drives these companies to do what they do.
One of the biggest reasons they do “it” is because improving customer experiences (really improving them) has a double benefit. It decreases the cost of operations while improving the customer experience. That statement can start some arguments with people who believe that improving the customer experience requires more employees, more work and (heaven forbid) the willingness to give more to customers without increasing what the customer is charged. But consider this: when we are really improving the customer experience we are eliminating the reasons the customer needs to contact us and the Moments of Truth (interactions) that occur when they do.

There’s only one way to do that: sophistication. Sophistication here follows the inspiration of Leonardo da Vinci (“simplicity is the ultimate sophistication”), who was intrigued by the challenge of resolving complex matters to their simple – shall we say quintessential – form. It’s like chipping away the unneeded parts of a stone to reveal the art form hidden underneath, refining by removing the “dross” if you will. That kind of refinement will dramatically reduce the work of the processes behind the “customer processes” – and that means they cost less for us to operate and maintain. So when we really start improving these customer processes our operating and maintenance costs will also decrease – sometimes by as much as a factor of two or three from where we started, over several refinement iterations.

How much do you think it costs Virgin Mobile USA to facilitate the sign-up of a new customer? Compared to, say, AT&T or Verizon? How much do you think it costs State Farm to implement the homeowner's small claim process with 3 Moments of Truth compared to the competitor’s process with 13 Moments of Truth? We are hard pressed to get the return on investment of EITHER of the values that can be derived from innovation on the customer experience, let alone both from the same set of actions!
Eliminating Moments of Truth

Discount Tire changed the after-market tire industry in the United States by offering good quality tires at a low price without any up-selling or add-on services. People at the front lines of the tire sales business had been participating in their company’s internal process of “selling more to every customer.” Bruce Halle changed that with Discount Tire by participating in the customer’s process instead, helping his customers get the tires they needed as simply, directly and inexpensively as possible. That move, that shift from the company’s process to the customer’s process, was a big enough deal to customers for Discount Tire to become the undisputed 800-pound gorilla in the after-market tire industry. Most of the entrenched competitors don’t even exist any more. They were eliminated along with the Moments of Truth Discount Tire eliminated in the customer process.

Steve Jobs and Apple have been very busy lately eliminating Moments of Truth in the interactions people have with Apple products. From notebook computers to music to phones – Apple is changing what people expect of the device interaction they will have when they use Apple products. Simpler, easier and more successful. Elimination of every Moment of Truth we can possibly figure out how to eliminate. This is the difference that has catapulted Apple into suddenly being a serious market contender in entrenched, high-volume markets.

Sir Richard Branson is very good at both eliminating Moments of Truth and giving front line employees the authority and responsibility to own successful customer relationships.
Both activities actually eliminate many Moments of Truth: the initial interaction with many of the Virgin companies is much simpler, and giving authority and responsibility to front line staff enables them to resolve customer needs without spewing internal business rules out to customers or engaging in a lengthy internal process that leaves customers angry and frustrated.

Why Can’t We Do That?

So the question is, why are only a handful of companies able to do things like this? What is stopping the rest of us from following suit? Why can’t we do that? More than anything else it is a question of perspective. When we view our world from our own perspective we will act in accordance with our own wants and needs. When we place our (our organization’s) wants and needs first, the customer experience will always suffer. Yet that will challenge our ability to get and keep our customers. The two are inextricably interlinked. They cannot be separated. Customers are either an object we are attempting to manipulate for our own desires or they are the reason we exist and we orient everything we do to making their lives as simple, easy and successful as possible.

So while we can all understand that orienting on our customers is obviously the most opportunistic way for us to create business success, the vast majority of organizations still manage themselves against their own wants and needs. They may have employees who champion the customer experience, making it less onerous than perhaps it would be otherwise, but the mindset is a “me, me, me” mindset, and that introduces a fundamental limit on the organization’s ability to move up the customer value chain.

What are the Limits?

Using innovation on Moments of Truth and the customer value chain really doesn’t have any limits. Being integral to our customers’ lives at new levels of value is only maxed out until the next innovation comes along.
Like many things, because innovation is not a simple thing (nor is it something you just do every so often), we will continually reach plateaus where our thinking stalls for a while. The most innovative ideas come from questioning why something is “that way” – challenging every assumption “that way” is based upon. Where did these assumptions come from? Oftentimes we act on assumptions that have no firm foundation we can find; they are simply our “set of assumptions.” Innovation roots all of these assumptions out into the light of day to see if they are valid. For customer experiences, the “assumptions” that must be challenged are each and every Moment of Truth that exists in every single customer process we create.

The only real limits we face come from our limited observational perspective (more commonly referred to as “not being able to see the forest for the trees”) and our fear (translated as “perceived risk”) of the unknown. What if GM suddenly implemented a program that allowed customers to buy a new vehicle for a certain monthly price, with a new car of their choice every two years, and all maintenance, upkeep and repairs included? Just one low monthly price? How much would that change things? Could they do that? It’s like asking the cellular service providers: can we sell our service without contracts – just pay as you go? Before Virgin Mobile USA did it, they would have answered no.

But even when we take ownership of those customer end-to-end processes we have to be brave, because once we own them we are responsible for making them “right” for our customers. Do you know what your customers experience now? Do you really know? Be careful how closely you look, because once you look closely you will own them – and you will need to deal with their inadequacy. Limits to this kind of innovation come from us, the people who must do the innovating.
Some people will turn away from the very idea (actually, many people will) because it’s just too different from what they already know. Any of us can bring innovation into our strategic activities and any of us can improve customer experiences, increase our value, and even raise ourselves up to a higher value chain. Any of us can, some of us will, and a few of us (like Sir Richard) will use it over and over and over again.

Your Moment of Truth

What would you do if you could take this concept to heart? What would change in your organization? How would this play out in the customer experiences your organization provides to its customers? Could you revolutionize customer value if you were given that opportunity? I hope that you could. Because for many of us that is where it starts. When we can’t get a corporate mandate to do what we obviously should do to help our organization succeed, we can still challenge as many Moments of Truth as possible. We can still eliminate Moments of Truth whenever the opportunity arises. We can find opportunities to do this that we wouldn’t find if we weren’t looking for them. We can begin to change the customer experience from within, one Moment of Truth at a time. And that, my friends, may lead to the opening of the door that will grant us permission to challenge all of the Moments of Truth around us.

Terry Schurter
Director, International Process and Performance Institute
Terry.schurter@ipapi.org

Written by Jon Pyke


Situational Applications Provisioning and the Cloud

The entire field of computing is fast becoming a “cloud”: a collection of disembodied services accessible from anywhere and detached from the underlying hardware. There will be many ways in which the cloud will change businesses and the economy, most of them hard to predict, but one theme is already emerging. Businesses are becoming more like the technology itself: more adaptable, more interwoven and more specialized. These developments may not be new, but the advent of cloud computing will speed them up.

According to IDC, a quarter of corporate data centers in America have run out of space for more servers. For others cooling has become a big constraint, and often utilities cannot provide the extra power needed for an expansion. IDC believes that many data centers will be consolidated and overhauled. Hewlett-Packard used to have 85 data centers with 19,000 IT workers worldwide, but expected to cut this down to six facilities in America with just 8,000 employees by the end of this year, reducing its IT budget from 4% to 2% of revenue. HP is not alone, and the Perfect Storm will speed up this trend as companies strive to become more efficient.

This Cloud of computing resources will not only affect the number of data centers and the number of people employed in them – it will have profound implications for the organization. On one level the cloud will be a huge collection of electronic services based on standards. Many web-based services are built to be integrated into existing business processes. IT systems will permit organizations to become more modular and flexible, and this will lead to further specialization. In the Cloud it will become even easier to outsource business processes, or at least those parts of them where firms do not enjoy a competitive advantage. This also means that companies will rely more on services provided by others.
Furthermore, there will be not just one cloud but a number of different sorts: private ones and public ones, which themselves will divide into general-purpose and specialized ones. People are already using the term “intercloud” to mean a federation of all kinds of clouds, in the same way that the internet is a network of networks. And all of those clouds will be full of applications and services. There will be many ways in which the cloud will change businesses and the economy, most of them hard to predict, but one theme is already emerging. In the current economic environment businesses will have to become more like the technology itself: more adaptable, more interwoven and more specialized.

Situational Application Provisioning is a very different proposition from what we usually think of as applications, and it therefore represents a very different opportunity. It is a mechanism whereby a user can put together an “application” based around normal working patterns, using readily available services. This means that it is possible to handle any sort of business problem usually tackled by enterprise solutions by associating virtually any number of web services within the context of an application. Process Provisioning is effectively an application generator within a process and is inherently more flexible, easier to provide, easier to manage and easier to use than traditional “ERP” type products.

According to Wikipedia, a situational application is software created for a small group of users with specific needs. The application typically has a short life span, and is often created within the group where it is used, sometimes by the users themselves. As the requirements of a small team using the application change, the situational application often also continues to evolve to accommodate these changes.
Significant changes in requirements may lead to an abandonment of the situational application altogether – in some cases it is just easier to develop a new one than to evolve the one in use.

The idea of end-user computing in the enterprise is not new. Development of applications by amateur programmers using IBM Lotus® Notes®, Microsoft® Excel spreadsheets in conjunction with Microsoft Access, or other tools is widespread. What's new in this mix is the impressive growth of community-based computing coupled with an overall increase in computer skills, the introduction of new technologies, and an increased need for business agility.

Most software companies think on-demand applications (SaaS) are a replacement for traditional business software. They couldn’t be more wrong. Sure, these software-as-a-service (SaaS) applications are sold as a service and paid for per transaction, but they are developed, sold and delivered in the same manner as traditional licensed software. There are two clear reasons for needing process technology to underpin the provision of these applications:

1. Rapid Innovation – Ra-In Clouds

The initial thrust for the cloud is data center and “standard” applications such as SalesForce.com and Google Apps. But this is just the start – the cloud has significantly more potential than simply being able to provide specialized applications and flexible data storage. Gartner defines cloud computing as a style of computing where massively scalable IT-related capabilities are provided “as a service” using Internet technologies to multiple external customers. “During the past 15 years, a continuing trend toward IT industrialization has grown in popularity as IT services delivered via hardware, software and people are becoming repeatable and usable by a wide range of customers and service providers,” said Daryl Plummer, managing vice president and Gartner Fellow.
“This is due, in part to the commoditization and standardization of technologies, in part to virtualization and the rise of service-oriented software architectures, and most importantly, to the dramatic growth in popularity of the Internet.” Plummer said that taken together, these three major trends constitute the basis of a discontinuity that will create a new opportunity to shape the relationship between those who use IT services and those who sell them. According to Gartner, the types of IT services that can be provided through a cloud are wide-reaching and include:

Gartner predicts that the impact of cloud computing on IT vendors will be huge. Established vendors have a great presence in traditional software markets, and as new Web 2.0 and cloud business models evolve and expand outside of consumer markets, a great deal could change. “The vendors are at very different levels of maturity,” said David Cearley, vice president and Gartner Fellow. “The consumer-focused vendors are the most mature in delivering what Gartner calls a ‘cloud/Web platform’ from technology and community perspectives, but the business-focused vendors have rich business services and, at times, are very adept at selling business services.”

How do businesses take advantage of low-cost, on-demand computing power to drive their growth, become more efficient and compete better in a global market economy? This is where SaaS-enabled BPM platforms come into play, by providing an environment where business users and developers can work together to build new applications from scratch or by mashing up services that are widely and readily available in the cloud. These applications will almost certainly start out as situational or ad-hoc applications. They are important to the company but are not strategic line-of-business requirements. These applications are ideal for process-centric deployment in the cloud. Why is this so?
Despite the fact that these Situational Applications are not strategic, they do have to be properly controlled. That means they have to be compliant, auditable, recorded and controlled. Furthermore there is every possibility that they will have to be fully integrated into some “on premise” applications or even outsourced processes – therefore they need to show proper and full corporate governance. For most, deploying these applications in the cloud is too radical unless they can be properly controlled and managed. Furthermore, even though they do not involve large IT investment or involvement, they cannot be allowed to flout corporate standards – building situational applications based on proper process control in the cloud is by far the best way to do it.

In addition the organization will require access to on-premise enterprise applications such as SAP, Oracle etc., and these will be accessed through both Private and Public Business Application Clouds. The combination of the high availability of Cloud infrastructure at a low cost and innovative Cloud services means that the organization needs an Assembly and Orchestration layer in the Cloud to fully deliver useful business advantages.

Benefits of this approach to the business users:

The following situational applications might be considered to be the minimum requirement for true office administration type services that one might expect Application Provisioning to deliver.

In order to truly leverage the power of the SaaS model, we need to reconsider the SaaS BPM proposition – we shouldn’t think of this as BPM as a Service, more a platform as a service, a redefined application server if you will.

Jon Pyke was the Chief Technology Officer and a main board director of Staffware Plc from August 1992 until October 2003. He demonstrates an exceptional blend of Business/People Manager; a Technician with a highly developed sense where technologies fit and how they should be utilized. Jon is a world recognized industry figure; an exceptional public speaker and a seasoned quoted company executive.

Keith Ayers is the President of Integro Leadership Institute LLC, a leadership consulting group based in West Chester, PA. Originally from Australia, Keith joined Integro as a consultant in 1977 and took over the ownership of the organization in 1982. Demand for his programs and expertise in the United States led him to move to Pennsylvania in August 2001. The U.S. division of Integro now has over 60 certified associates across North America.

Keith Swenson

PETER FINGAR is an internationally recognized expert on business strategy, globalization and business process management. He's a practitioner with over thirty years of hands-on experience at the intersection of business and technology.

Max. J. Pucher is the Founder and current Chief Architect of ISIS Papyrus Software, an independent software vendor focused on business content (documents?) and process management. Our speciality is to utilize Machine Learning technology to reduce the amount of programming needed to analyze and create content and related processes. Our key concept is that there are no processes without content and content without process is irrelevant. Of course there is documentation and marketing content that is not related to a business process instance, but still needed to perform a certain process. I challenge anyone here to provide me with proof that content and process are not the same. It is not a one-to-one relationship, as multiple pieces of content may make up a single process or multiple processes may use the same content. I also claim right here that processes are always in need of working with inbound and outbound content, except odd processes such as vacation authorization. The need for complex process links disappears when content state controls process progression. I therefore do not see the need for standalone BPM products that only create substantial integration and programming efforts. I propose that businesses need a consolidated approach for ECM, BPM, CRM, as well as business rules and operational business intelligence, which Forrester Research calls a DBA or Dynamic Business Application.